AI means hiring more young people, not fewer.

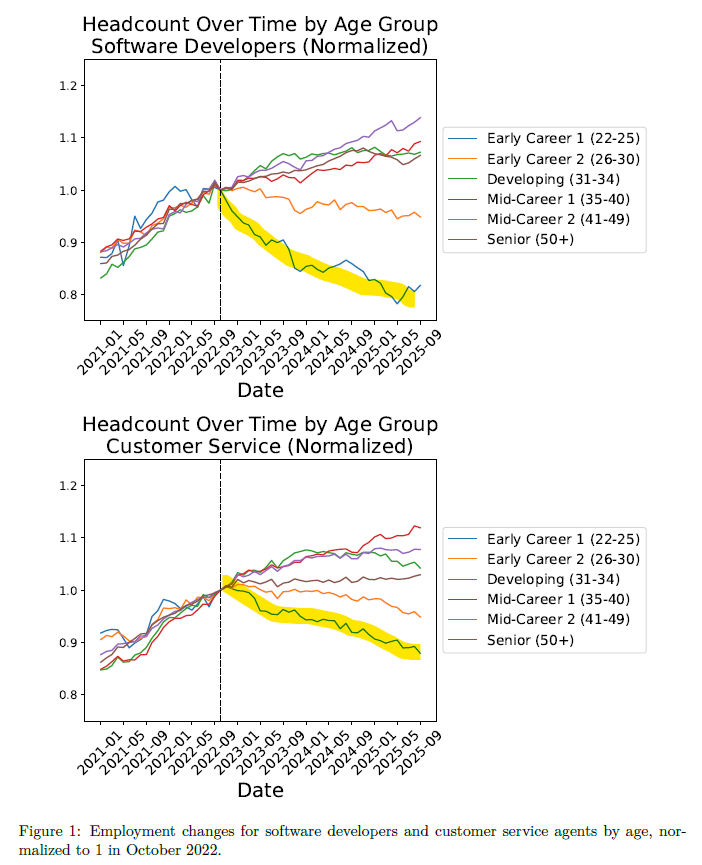

That is not the consensus view. The tasks most exposed to AI adoption overlap heavily with entry-level work, and the data is starting to reflect it. A Stanford study published last month, using ADP payroll records covering millions of workers, found that employment for 22- to 25-year-olds in the most AI-exposed occupations fell roughly 16% relative to less exposed roles, controlling for firm-level shocks. Employment for experienced workers in those same occupations? Stable or growing. Firms are not cutting salaries. They are simply not hiring the next generation.

The logic follows naturally. Junior employees are disproportionately assigned the codifiable, checkable tasks that LLMs handle well. A recent HBR piece by Edmondson and Chamorro-Premuzic made the same observation: AI is eroding the bottom rungs of career ladders by automating the intellectually routine work that entry-level roles were built around.

But the logic is also wrong. And it is wrong because it inverts the problem.

I have spent the past several months building AI into my own workflow. Claude Code connected to a live data warehouse. Autonomous agents handling tasks that used to take a junior analyst half a day. I have watched the pace of innovation inside our teams accelerate to a speed we once could only dream of. The productivity gains are real. And they have convinced me that the unintended outcome of AI adoption, a shrinking base and a protected top, is exactly backwards.

AI does not replace judgment, creativity, or the instinct to try something no one has tried before. It replaces routine. Routine may not be what young people are best at, but routine also builds taste, wisdom, and intuition. It is the unglamorous work that turns potential into expertise. The answer is not to eliminate it. It is to pair it with tools that compress the learning curve and free up time for the work that actually develops judgment.

Young workers on our teams are the ones bringing AI fluency, fresh pattern recognition, and the willingness to break workflows designed for a pre-LLM world. They are the ones who will figure out how to deploy these tools at scale. They are the ones stress-testing what is possible. You need a critical mass of them, and you need a steady inflow, or you risk losing the energy, the ideas, and the perspective that come only from people who have never known a world without these tools.

These dynamics make exceptional leadership the scarce resource: experienced leaders who have done the reps, who can get their hands dirty, who can convey the hard-won wisdom of that experience, but who also know how to let loose the talent and energy of the team around them. Think of it like driving a dog sled. You need to know the trail. You need to read the conditions. But the team is doing the running, and your job is to point them in the right direction and not hold them back.

Left unchecked, the unintended consequence emerges. Experienced workers use AI to absorb the tasks that would otherwise have gone to junior colleagues. That reduces direct demand for those roles, which leads to less hiring at that level. Nobody decided to hollow out the pipeline. It just happens, one automated task at a time. Follow that drift forward five years and you have plenty of managers and nobody coming up behind them.

The question is not whether AI changes hiring. It is whether we are smart enough to automate the right things and invest in the right people.

Comments, questions or things I missed? Send me a note (or hit reply) - I would love to hear from you. Thanks for reading!

Very well articulated and a must-read for those wondering about the future for young people trying to enter the workforce. Rather than being seen as a replacement for hiring, AI needs to be embraced and used to enhance one's skills.